Sun, 22 December 2019

We dig into what it takes to make a maintainable application as we continue to learn from Designing Data-Intensive Applications, as Allen is a big fan of baby Yoda, Michael’s index isn’t corrupt, and Joe has some latency issues. In case you’re reading this via your podcast player, this episode’s full show notes can be found at https://www.codingblocks.net/episode122 where you can join in on the conversation. Sponsors - Educative.io – Level up your coding skills, quickly and efficiently. Visit educative.io/codingblocks to get 20% off any course or, for a limited time, get 50% off an annual subscription.

- ABOUT YOU – One of the fastest growing e-commerce companies, headquartered in Hamburg, Germany, and looking for motivated team members like you. Apply now at aboutyou.com/job.

Survey Says News - Thank you for taking a moment out of your day to leave us a review:

- iTunes: Kodestar, CrouchingProbeHiddenCannon

- Stitcher: programticalpragrammerer, Patricio Page, TheOtherOtherJZ, Luke Garrigan

- Be careful about sharing/using code to/from Stack Overflow! You need to be aware of the licensing and what it might mean for your application.

- What is the license for the content I post? (Stack Overflow)

- Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) (Creative Commons)

- Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) (tldrlegal.com)

- We Still Don’t Understand Open Source Licensing (episode 5)

- Get your tickets now for NDC { London } for your chance to kick Allen in the shins where he will be giving his talk Big Data Analytics in Near-Real-Time with Apache Kafka Streams. (ndc-london.com)

Maintainability - A majority of cost in software is maintaining it, not creating it in the first place.

- Coincidentally, most people dislike working on these legacy systems, possibly due to one or more of the following:

- Bad code,

- Outdated platforms, and/or

- Made to do things the system wasn’t designed to do.

- We SHOULD build applications to minimize the pain during the maintenance phase, which involves using the following design principles:

- Operability – make it easy to keep the system running smoothly.

- Simplicity – make it easy for new developers to pick up and understand what was created, remove complexity from the system.

- Evolvability – make it easy for developers to extend, modify, and enhance the system.

Operability - Good operations can overcome bad software, but great software cannot overcome bad operations.

- Operations teams are vital for making software run properly.

- Responsibilities include:

- Monitoring and restoring service if the system goes into a bad state.

- Tracking down the problems.

- Keeping the system updated and patched.

- Keeping track of how systems impact each other.

- Anticipating and planning for future problems, such as scale and/or capacity.

- Establish good practices for deployments, configuration management, etc.

- Doing complicated maintenance tasks, such as migrating from one platform to another.

- Maintaining security.

- Making processes and operations predictable for stability.

- Keeping knowledge of systems in the business even as people come and go. No tribal knowledge.

- The whole point of this is to make mundane tasks easy, allowing the operations teams to focus on higher value activities.

- This is where data systems come in.

- Get visibility into running systems, i.e. monitoring.

- Supporting automation and integration.

- Avoiding dependencies on specific machines.

- Documenting easy to understand operational models, for example, if you do this action, then this will happen.

- Giving good default behavior with the ability to override settings.

- Self-healing when possible but also manually controllable.

- Predictable behavior.
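As a rough illustration of "good default behavior with the ability to override settings", here is a minimal Python sketch. The setting names, the `APP_` prefix, and the `load_settings` helper are all made up for this example and do not come from the book or the episode.

```python
import os
from collections import ChainMap

# Hypothetical settings for an imaginary service; the names are illustrative only.
DEFAULTS = {"port": "8080", "log_level": "INFO", "retry_limit": "3"}


def load_settings(overrides=None, environ=None, prefix="APP_"):
    """Layer explicit overrides over environment variables over defaults."""
    environ = os.environ if environ is None else environ
    # Keep only variables such as APP_PORT and map them to keys such as "port".
    env_layer = {
        key[len(prefix):].lower(): value
        for key, value in environ.items()
        if key.startswith(prefix)
    }
    # ChainMap searches its maps left to right, so earlier layers win.
    return dict(ChainMap(overrides or {}, env_layer, DEFAULTS))


settings = load_settings(
    overrides={"log_level": "DEBUG"},
    environ={"APP_PORT": "9090"},
)
print(settings)
```

Anything the operator does not set falls back to a sensible default, and every override is visible in one place.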

Simplicity - As projects grow, they tend to get much more complicated over time.

- This slows down development as it takes longer to make changes.

- If you’re not careful, it can become a big ball of mud.

- Indicators of complexity:

- Explosion of state space,

- Tight coupling,

- Spaghetti of dependencies,

- Inconsistent coding standards such as naming and terminology,

- Hacking in performance improvements,

- Code to handle one off edge cases sprinkled throughout.

- Greater risk of introducing bugs.

- Reducing complexity improves maintainability of software.

- For this reason alone, we should strive to make our systems simpler to understand.

- Reducing complexity DOES NOT mean removing or reducing functionality.

Accidental complexity – Complexity is accidental if it is not inherent in the problem that the software solves, but arises only from the implementation.

Ben Moseley and Peter Marks, Out of the Tar Pit

How to remove accidental complexity? - Abstraction

- Allows you to hide implementation details behind a facade.

- Also allows you to reduce duplication as abstractions can allow for reuse among many implementations.

- Examples of good abstractions:

- Programming languages abstract system level architectures such as CPU, RAM, registers, etc.

- SQL is an abstraction over complex memory and data structures.

- Finding good abstractions is VERY HARD.

- This has become apparent with distributed systems as these abstractions are still being explored.
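To make the facade idea a little more concrete, here is a small Python sketch. The `KeyValueStore` interface and both implementations are hypothetical, invented for this example. The point is only that the calling code depends on the abstraction, not on how the data is actually stored.

```python
from typing import Optional, Protocol


class KeyValueStore(Protocol):
    """The abstraction: callers only know about get and put."""

    def get(self, key: str) -> Optional[str]: ...

    def put(self, key: str, value: str) -> None: ...


class InMemoryStore:
    """Keeps the latest value per key in a dictionary."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def put(self, key, value):
        self._data[key] = value


class AppendOnlyLogStore:
    """Same interface, different internals: every write is appended to a list."""

    def __init__(self):
        self._log = []

    def get(self, key):
        # The newest entry wins, so scan the log from the end.
        for logged_key, value in reversed(self._log):
            if logged_key == key:
                return value
        return None

    def put(self, key, value):
        self._log.append((key, value))


def remember_login(store: KeyValueStore, user: str, when: str) -> None:
    """Reused unchanged with either implementation."""
    store.put(f"last_login:{user}", when)


for store in (InMemoryStore(), AppendOnlyLogStore()):
    remember_login(store, "joe", "2019-12-22")
    print(type(store).__name__, store.get("last_login:joe"))
```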

Evolvability - It’s VERY likely that your system will need to undergo changes as time moves on.

- Agile methods allow you to adapt to change. Some methods include:

- Test Driven Development

- Refactoring

- How easily you can modify a data system is closely linked to how simple the system is.

- Rather than calling it agility, the book refers to it as evolvability.

Resources We Like - Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems by Martin Kleppmann (Amazon)

- Grokking the System Design Interview (Educative.io)

- All permutations of English letters with a handy search feature (libraryofbabel.info)

- What is the license for the content I post? (Stack Overflow)

- Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) (Creative Commons)

- Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) (tldrlegal.com)

- We Still Don’t Understand Open Source Licensing (episode 5)

- Streaming process output to a browser, with SignalR and C# (Coding Blocks, dev.to)

- Googlewhack – A Google query using two words, without quotes, that returns a single search result. (Wikipedia)

- The Website is Down – Sales Guy vs. Web Dude (YouTube – Explicit)

- Software Design Anti-patterns (episode 65)

- Clean Architecture – How to Quantify Component Coupling (episode 72)

- Static Analysis w/ NDepends – How good is your code? (episode 15)

- Static Analysis of Open Source .NET Projects (Coding Blocks)

- Does Facebook still use PHP? (Quora)

Tip of the Week - Need to move data from here to there? Check out Fluentd. (fluentd.org)

- Use NUnit’s `Assert.Multiple` to assert that multiple conditions are all met. (GitHub)

- Turns out C#’s `where` generic type constraints are even cooler than you might have realized. (docs.microsoft.com)

- Best Practices for Response Times and Latency (GitHub)

Direct download: coding-blocks-episode-122.mp3

Category: Software Development

-- posted at: 8:33pm EDT


Sun, 8 December 2019

We continue to study the teachings of Designing Data-Intensive Applications, while Michael’s favorite book series might be the Twilight series, Joe blames his squeak toy chewing habit on his dogs, and Allen might be a Belieber. Head over to https://www.codingblocks.net/episode121 to find this episode’s full show notes and join in the conversation, in case you’re reading these show notes via your podcast player. Sponsors - Educative.io – Level up your coding skills, quickly and efficiently. Visit educative.io/codingblocks to get 20% off any course or, for a limited time, get 50% off an annual subscription.

Survey Says News - So many new reviews to be thankful for! Thanks for making it a point to share your review with us:

- iTunes: bobby_richard, SeanNeedsNewGlasses, Teshiwyn, vasul007, The Jin John

- Stitcher: HotReloadJalapeño, Leonieke, Anonymous, Juke0815

- Joe was a guest on The Waffling Taylors. Check out the entire three episode series: Squidge The Ring, Jay vs Doors, and Exploding Horses.

- Be aware of “free” VPNs.

- This iOS Security App Shares User Data With China: 8 Million Americans Impacted (Forbes)

- Facebook shuts down Onavo VPN app following privacy scandal (Engadget)

- Allen will be speaking at NDC { London } where he will be giving his talk Big Data Analytics in Near-Real-Time with Apache Kafka Streams. Be sure to stop by for your chance to kick him in the shins! (ndc-london.com)

Scalability - Increased load is a common reason for degradation of reliability.

- Scalability is the term used to describe a system’s ability to cope with increased load.

- It can be tempting to say X scales, or doesn’t, but calling a system “scalable” is really a statement about how good your options are for coping with growth.

- A couple of questions to ask of your system:

- If the system grows in a particular way (more data, more users, more usage), what are our options for coping?

- How easy is it to add computing resources?

Describing Load - We need to figure out how to describe load before we can truly answer any questions.

- “Load Parameters” are the metrics that make sense to measure for your system.

- Load Parameters are a measure of stress, not of performance.

- Different parameters may matter more for your system.

- Examples for particular uses may include:

- Web Server: requests per second

- Database: read/write ratio

- Application: # of simultaneous active users

- Cache: hit/miss ratio

- This may not be a simple number either. Sometimes you may care more about the average number, sometimes only the extremes.

Describing Performance - Two ways to look at describing performance:

- How does performance change when you increase a load parameter, without changing resources?

- How much do you need to increase resources to maintain performance when increasing a load parameter?

- Performance numbers measure how well your system is responding.

- Examples can include:

- Throughput (records processed per second)

- Response time

Latency vs Response Time - Latency is how long a request is waiting to be handled, awaiting service.

- Response time is the total time it takes for the client to see the response, including any latency.
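The difference between the two terms can be sketched with a tiny single-worker queue simulation in Python. This is an illustration under simplifying assumptions (one server, first-in-first-out, no network time), and the arrival and service times are made up.

```python
def simulate_fifo(requests):
    """Simulate one worker serving requests in arrival order.

    requests: list of (arrival_time, service_time) tuples, sorted by arrival.
    Returns a list of (latency, response_time) tuples, where latency is the
    time a request spends waiting before the worker starts on it.
    """
    results = []
    server_free_at = 0.0
    for arrival, service in requests:
        start = max(arrival, server_free_at)  # wait if the worker is busy
        latency = start - arrival
        response_time = latency + service     # waiting plus actual work
        server_free_at = start + service
        results.append((latency, response_time))
    return results


# One slow request (2.0 s of work) arrives first; two fast ones (0.1 s) queue behind it.
timings = simulate_fifo([(0.0, 2.0), (0.1, 0.1), (0.2, 0.1)])
for latency, response_time in timings:
    print(f"latency={latency:.1f}s response_time={response_time:.1f}s")
```

The two fast requests need only 0.1 s of work each, yet their response time is about 2 s because almost all of it is time spent waiting behind the slow request.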

What do you mean by “numbers”? - Performance numbers are generally a set of numbers, e.g. minimum, maximum, average, median, and percentiles.

- Sometimes the outliers are really important.

- For example, a game may have an average of 59 FPS but that number might drop to 10 FPS when garbage collection is running.

- Average may not be your best measure if you want to see the typical response time.

- For this reason it’s better to use percentiles.

- Consider the median (sort all the response times and the one in the middle is the median). Median is known as the 50th percentile or P50.

- That means that half of your response times will be faster than the median and half will be slower.

- To find the outliers, you can look at the 95th, 99th and 99.9th percentiles, aka P95, P99, P999.

- If you were to look at the P95 mark and the response time is 2s, then that means that 95% of all requests come back in under 2 seconds and 5% of all requests come back in over 2s.

- The example provided is that Amazon describes response times in the P999 even though it only affects 1 in 1000 users. The reason? Because the slowest response times would be for the customers who’ve made the most purchases. In other words, the most valued customers!

- Many large companies have measured how increased response times affect things like order completion and users leaving the site.

- Ultimately, trying to optimize for P999 is very expensive and may not make the investment worth it.

- Not only is it expensive but it’s also difficult because you may be fighting environmental things outside of your control.

- Percentiles are often used in:

- SLOs – Service Level Objectives

- SLAs – Service Level Agreements

- Both are contracts that define the expected performance and availability of a service.

- Queuing delays are a big part of response times in the higher percentiles.

- Servers can only process a finite amount of things in parallel and the rest of the requests are queued.

- A relatively small number of requests could be responsible for slowing many things down.

- Known as head-of-line blocking.

- For this reason, it’s important to measure response times on the client side to make sure you’re getting the full picture.

- In load testing, the client application needs to make sure it’s issuing new requests even if it’s waiting for older ones. This will more realistically mimic the real world.

- For applications that make multiple service calls to complete a screen or page, slower response times become very critical because typically the user experience is not good even if you’re just waiting for one out of 20 requests. The slowest offender is typically what determines the user experience.

- Compounding slow requests is known as tail latency amplification.

- Monitoring response times can be very helpful, but also dangerous.

- If you’re trying to calculate the real response time averages against an entire set of data every minute, the calculations can be very expensive.

- There are approximation algorithms that are much more efficient, such as forward decay, t-digest, or HdrHistogram.

- Averaging percentiles is meaningless. Rather you need to add the histograms.
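The percentile and tail latency points above can be tried out with a few lines of Python. This is a sketch, not a monitoring tool: the response times are invented, `percentile` uses the simple nearest-rank definition on the full data set (not one of the approximation algorithms mentioned above), and `chance_of_slow_page` assumes the backend calls are independent.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def chance_of_slow_page(calls, p_slow):
    """Probability that at least one of `calls` independent backend calls is slow."""
    return 1 - (1 - p_slow) ** calls


# Made-up response times in milliseconds: mostly fast, with two slow outliers.
times_ms = [20] * 90 + [50] * 8 + [900, 1500]

mean_ms = sum(times_ms) / len(times_ms)
print("mean:", mean_ms)
for p in (50, 95, 99, 99.9):
    print(f"P{p}:", percentile(times_ms, p))

# A page that makes 20 backend calls, each with a 1% chance of being slow.
print("chance of a slow page:", round(chance_of_slow_page(20, 0.01), 3))
```

With this data the mean is 46 ms even though the median is 20 ms, which is why the average is a poor stand-in for the typical request. The last line illustrates tail latency amplification: with 20 calls per page, roughly 18% of page loads hit at least one slow call even though only 1% of individual calls are slow.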

Coping with Load How do we retain good performance when our load increases? - An application that was designed for 1,000 concurrent users will likely not handle 10,000 concurrent users very well.

- It is often necessary to rethink your architecture every time load is increased significantly.

- The options, simplified:

- Scaling up – adding more hardware resources to a single machine to handle additional load.

- Scaling out – adding more machines to handle additional load.

- One is not necessarily better than the other.

- Scaling up can be much simpler and easier to maintain, but there is a limit to the power available on a single machine as well as the cost ramifications of creating an uber-powerful single machine.

- Scaling out can be much cheaper in hardware costs but cost more in developer time and maintenance to make sure everything is running as expected.

- Rather than picking one over the other, consider when each makes the most sense.

- Elasticity is when a system can dynamically resize itself based on load, i.e. adding more machines as necessary, etc.

- Some of this happens automatically based on some load detecting criteria.

- Some of this happens manually by a person.

- Manually may be simpler and can protect against unexpected massive scaling that may hit the wallet hard.

- For a long time, RDBMSs typically ran on a single machine. Even with a failover, the load wasn’t typically distributed.

- As distributed systems are becoming more common and the abstractions are being better built, things like this are changing, and likely to change even more.

“[T]here is no such thing as a generic, one-size-fits-all scalable architecture …” Martin Kleppmann - Problems could be reads, writes, volume of data, complexity, etc.

- A system that handles 100,000 requests per second at 1 KB in size is very different from a system that handles 3 requests per minute with each file size being 2 GB. Same throughput, but much different requirements.

- Designing a scalable system based on bad assumptions can be wasted time and, even worse, counterproductive.

- In early stage applications, it’s often much more important to be able to iterate quickly on the application than to design for an unknown future load.
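The "same throughput" claim in the comparison above is easy to check with a little arithmetic. The Python sketch below assumes decimal units (1 KB = 1,000 bytes and 1 GB = 1,000,000,000 bytes); the helper name is made up.

```python
KB = 1_000          # assumption: decimal kilobytes
GB = 1_000_000_000  # assumption: decimal gigabytes


def bytes_per_second(requests, per_seconds, payload_bytes):
    """Average data volume per second for a given request rate and payload size."""
    return requests * payload_bytes / per_seconds


many_small = bytes_per_second(100_000, 1, 1 * KB)  # 100,000 requests/s at 1 KB each
few_large = bytes_per_second(3, 60, 2 * GB)        # 3 requests/min at 2 GB each

print(many_small / 1_000_000, "MB/s")
print(few_large / 1_000_000, "MB/s")
```

Both systems move about 100 MB per second, but one has to handle 100,000 tiny requests every second while the other handles a large file every 20 seconds, so the designs would look very different.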

Resources We Like - Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems by Martin Kleppmann (Amazon)

- Grokking the System Design Interview (Educative.io)

- HdrHistogram: A High Dynamic Range Histogram (hdrhistogram.org)

- Performance (Stack Exchange)

- AWS Snowmobile (aws.amazon.com)

- Monitoring Containerized Application Health with Docker (Pluralsight)

Tip of the Week - Get back into Slack: Instead of typing in your Slack room name, just click the “Find your workspace” link and enter your email – it’ll send you all of the workspaces linked to that email.

- Forget everything Michael said about CodeDOM in episode 112. Just use Roslyn. (GitHub)

- The holidays are the perfect time for Advent of Code. (adventofcode.com)

Direct download: coding-blocks-episode-121.mp3

Category: Software Development

-- posted at: 10:58pm EDT


Mon, 25 November 2019

We start our deep dive into Joe’s favorite new book, Designing Data-Intensive Applications as Joe can’t be stopped while running downhill, Michael might have a new spin on #fartgate, and Allen doesn’t quite have a dozen tips this episode. If you’re reading this via your podcast player, you can always go to https://www.codingblocks.net/episode120 to read these show notes on a larger screen and participate in the conversation. Sponsors Survey Says What is the single most important piece of your battlestation? News - Thank you to those that took time out of their day to leave us a review:

- Stitcher: Anonymous, jeoffman

- How to get started with a SQL Server database using Docker:

- SQL Server Tips – Run in Docker and an Amazing SSMS Tip (YouTube)

- Sample SQL Server Database and Docker (YouTube)

- Come see Allen at NDC { London } for your chance to kick him in the shins, where he will be giving his talk Big Data Analytics in Near-Real-Time with Apache Kafka Streams. (ndc-london.com)

- John Deere – Customer Showcase: Perform Real-time ETL from IoT Devices into your Data Lake with Amazon Kinesis (YouTube)

- There’s a new SSD sheriff in town and it’s the Seagate Firecuda 520 with a reported maximum 5,000 MB/s sequential reads and 4,400 MB/s sequential writes!!! (Amazon)

- Seagate Firecuda 520 1TB NVMe PCIe Gen4 M.2 SSD Review (TweakTown)

- Get 40% off your Pluralsight subscription! (Pluralsight)

- Joe was a guest on The Waffling Taylors, episode 59. (wafflingtaylors.rocks)

Designing Data-Intensive Applications About this book What is a data-intensive application per the book? Any application whose primary challenge is: - The quantity of data.

- The complexity of the data.

- The speed at which the data is changing.

That’s in contrast to applications that are compute intensive. Buzzwords that seem to be synonymous with data-intensive - NoSQL

- Message queues

- Caches

- Search indexes

- Batch / stream processing

This book is … This book is NOT a tutorial on how to do data-intensive applications with a particular toolset or pure theory. What the book IS: - A study of successful data systems.

- A look into the tools / technologies that enable these data intensive systems to perform, scale, and be reliable in production environments

- Examining their algorithms and the trade-offs they made.

Why read this book? The goal is that by going through this, you will be able to understand what’s available and why you would use various methods, algorithms, and technologies. While this book is geared towards software engineers/architects and their managers, it will especially appeal to those that: - Want to learn how to make systems scalable.

- Need to learn how to make applications highly available.

- Want to learn how to make systems easier to maintain.

- Are just curious how these things work.

“[B]uilding for scale that you don’t need is wasted effort and may lock you into an inflexible design.” Martin Kleppmann - While building for scale can be a form of premature optimization, it is important to choose the right tool for the job and knowing these tools and their strengths and weaknesses can help you make better informed decisions.

Most of the book covers what is known as “big data” but the author doesn’t like that term for good reason: “Big data” is too vague. Big data to one person is small data to someone else. Instead, single node vs distributed systems are the types of language used in the book. The book is also heavily biased towards FOSS (Free Open Source Software) because it’s possible to dig in and see what’s actually going on. Are we living in the golden age of data? - There are an insane number of really good database systems.

- The cloud has made things easy for a while, but tools like K8s, Docker, and Serverless are making things even easier.

- Tons of machine learning services taking care of common use cases, and lowering the barrier to entry: NLP, STT, and Sentiment analysis for example.

Reliability - Most applications today are data-intensive rather than compute-intensive.

- CPUs are usually not the bottleneck in modern day applications – size, complexity, and the dynamic nature of data are.

- Most of these applications have similar needs:

- Store data so the application can find it again later.

- Cache expensive operations so they’ll be faster next time.

- Allow user searches.

- Sending messages to other processes – stream processing.

- Process chunks of data at intervals – batch processing.

- Designing data-intensive applications involves answering a lot of questions:

- How do you ensure the data is correct and complete even when something goes wrong?

- How do you provide good performance to clients even as parts of your system are struggling?

- How do you scale to handle increased load?

- How do you create a good API?

While reading this book, think about the systems that you use: How do they rate in terms of reliability, scalability, and maintainability? What does it mean for your application to be reliable? - The application performs as expected.

- The application can tolerate a user mistake or misuse.

- The performance is good enough for the expected use case.

- The system prevents any unauthorized access or abuse.

So in short – the application works correctly even when things go wrong. When things go wrong, they’re called “faults”. - Systems that are designed to work even when there are faults are called fault-tolerant or resilient.

Faults are NOT the same as failures: a fault is a component deviating from its spec, whereas a failure means the system as a whole stops providing the required service. - The goal is to reduce the possibility of a fault causing a failure.

- It may be beneficial to introduce or ramp up the number of faults thrown at a system to make sure the system can handle them properly.

- You’re basically continually testing your resiliency.

- Netflix’s Chaos Monkey is an example.

- The book prefers tolerating faults over preventing them (except in case of things like security), and is mostly aimed at building a system that is self healing or curable.
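Here is a small Python sketch of the fault versus failure distinction and of deliberately injecting faults to test resiliency. It is not how Chaos Monkey works (that tool terminates real instances at random); `FlakyService` and `call_with_retries` are hypothetical names, and the faults are injected deterministically so the behavior is easy to follow.

```python
class FlakyService:
    """Hypothetical dependency that raises an injected fault on its first `faults` calls."""

    def __init__(self, faults):
        self.remaining_faults = faults
        self.calls = 0

    def fetch(self):
        self.calls += 1
        if self.remaining_faults > 0:
            self.remaining_faults -= 1
            raise ConnectionError("injected fault")
        return "ok"


def call_with_retries(operation, attempts=3):
    """Tolerate transient faults by retrying; give up (a failure) once attempts run out."""
    last_error = None
    for _ in range(attempts):
        try:
            return operation()
        except ConnectionError as error:
            last_error = error
    raise RuntimeError("service unavailable") from last_error


# Two faults are absorbed by the retries, so the caller never sees them.
recovering = FlakyService(faults=2)
print(call_with_retries(recovering.fetch), "after", recovering.calls, "calls")

# Five faults exceed the retry budget, so the fault turns into a failure.
broken = FlakyService(faults=5)
try:
    call_with_retries(broken.fetch)
except RuntimeError as failure:
    print("failure:", failure)
```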

Hardware Faults Typically, hardware failures are solved by adding redundancies: - Dual power supplies,

- RAID configuration,

- Hot swappable CPUs or other components.

As time has marched on, single machine resiliency has been deprioritized in favor of elasticity, i.e. the ability to scale up / down more machines. As a result, systems are now being built to be fault tolerant of machine loss. Software Errors Software errors usually happen by some weird event that was not planned for and can be more difficult to track down than hardware errors. Examples include: - Runaway processes can use up shared resources,

- Services slow down,

- Cascading failures that trigger a fault(s) in another component(s).

Human Errors Humans can be the least reliable part of any system. So, how can we make systems reliable in spite of our best efforts to crash them? - Good UIs, APIs, etc.

- Create fully featured sandbox environments where people can explore safely.

- Test thoroughly.

- Allow for fast rollbacks in case of problems.

- Amazing monitoring.

- Good training / practices.

How important is reliability? - Obviously there are some situations where reliability is super important (e.g. nuclear power plant).

- Other times we might choose to sacrifice reliability to reduce cost.

- But most importantly, make sure that’s a conscious choice!

Resources We Like - Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems by Martin Kleppmann (Amazon)

- Stefan Scherer has a Docker image for all your Windows needs (hub.docker.com)

- Aspectacular with Vlad Hrybok – You down with AOP? (episode 9)

- Grokking the System Design Interview (Educative.io)

- Designing a URL Shortening service like TinyURL (Educative.io)

- Looking for inspiration for your battlestation? Check out r/battlestations! (Reddit)

- Chaos Monkey by Netflix (GitHub)

- Pokemon Sword and Shield Are Crashing Roku Devices (GameRant)

- #140 The Roman Mars Mazda Virus by Reply All (Gimlet Media)

- Troubleshoot .NET apps with auto-correlated traces and logs (Datadog)

- Your hard drives and noise:

- Shouting at disks causes latency (Reddit, YouTube)

- A Loud Sound Just Shut Down a Bank’s Data Center for 10 Hours (Vice)

- Beware: Loud Noise Can Cause Data Loss on Hard Drives (Ontrack)

- Backblaze Hard Drive Stats Q2 2019 (Backblaze)

- Failure Trends in a Large Disk Drive Population (static.googleusercontent.com)

Tip of the Week - When using NUnit and parameterized tests, you should prefer `IEnumerable<TestCaseData>` over something like `IEnumerable<MyFancyObject>` because `TestCaseData` includes methods like `.Explicit()`, giving you more control over each test case parameter. (NUnit wiki on GitHub)

- Not sure which DB engine meets your needs? Check out db-engines.com.

- Search your command history with `CTRL+R` in Cmder or Terminal (and possibly other shells). Continue pressing `CTRL+R` to “scroll” through the history that matches your current search.

- Forgo the KVM. Use Mouse without Borders instead. (Microsoft)

- Synergy – The Mac OS X version that Michael was referring to. Also works across Windows and Linux. (Synergy)

- usql – A universal command-line interface for PostgreSQL, MySQL, Oracle, SQLite3, SQL Server, and others. (GitHub)

Direct download: coding-blocks-episode-120.mp3

Category: Software Development

-- posted at: 3:01am EDT


Mon, 11 November 2019

We discuss this year's shopping spree only to learn that Michael spent too much, Allen spent too much, and Joe spent too much.

Direct download: coding-blocks-episode-119.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 27 October 2019

We debate whether DevOps is a job title or a job responsibility as Michael finally understands dev.to’s name, Allen is an infosec expert, and Joe wears his sunglasses at night. If you aren’t already viewing this episode’s show notes in your browser, you can find these show notes at https://www.codingblocks.net/episode118 and join the conversation. Sponsors - Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after creating your first dashboard.

- WayScript – Sign up and build cloud hosted tools that seamlessly integrate and automate your tasks.

- Educative.io – Level up your coding skills, quickly and efficiently. Visit educative.io/codingblocks to get 20% off any course.

Survey Says … Is DevOps a ... Take the survey here: https://www.codingblocks.net/episode118. News - We appreciate everyone that took a moment to leave us a review and say thanks:

- iTunes: kevo_ker, Cheiss, MathewSomers

- Stitcher: BlockedTicket

Is DevOps a Job Title or Company Culture? - What is DevOps?

- What isn’t DevOps?

- How do you learn DevOps?

- How mature is your DevOps?

- The myths of DevOps …

Resources We Like - What Is DevOps? (New Relic)

- The Phoenix Project: A Novel about IT, DevOps, and Helping Your Business Win (Amazon)

- The DevOps Handbook: How to Create World-Class Agility, Reliability, and Security in Technology Organizations (Amazon)

- The Unicorn Project: A Novel about Developers, Digital Disruption, and Thriving in the Age of Data (Amazon)

- DevOps is a culture, not a role! (Medium)

- Why is kubernetes source code an order of magnitude larger than other container orchestrators? (Stack Overflow)

- Welcoming Molly – The DEV Team’s First Lead SRE! (dev.to, Molly Struve)

- The DevOps Checklist (devopschecklist.com)

- Vagrant (vagrantup.com)

Tip of the Week - Edit your last Slack message by pressing the UP arrow key. That and more keyboard shortcuts available in the Slack Help Center. (Slack)

- Integrate Linux Commands into Windows with PowerShell and the Windows Subsystem for Linux (devblogs.microsoft.com)

- Use `readlink` to see where a symlink ultimately lands. (manpages.ubuntu.com)

- What are Durable Functions? (docs.microsoft.com)

- Change your Cmder theme to Allen’s favorite: Babun. (cmder.net)

- My favourite Git commit (fatbusinessman.com)

Direct download: coding-blocks-episode-118.mp3

Category: Software Development

-- posted at: 11:39pm EDT


Sun, 13 October 2019

We take an introspective look into what’s wrong with Michael’s life, Allen keeps taking us down random tangents, and Joe misses the chance for the perfect joke as we wrap up our deep dive into Hasura’s 3factor app architecture pattern. For those reading these show notes via their podcast player, this episode’s full show notes can be found at https://www.codingblocks.net/episode117. Sponsors - Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after creating your first dashboard.

- O’Reilly Software Architecture Conference – Microservices, domain-driven design, and more. The O’Reilly Software Architecture Conference covers the skills and tools every software architect needs. Use the code `BLOCKS` during registration to get 20% off of most passes.

- Educative.io – Level up your coding skills, quickly and efficiently. Visit educative.io/codingblocks to get 20% off any course.

Survey Says … News - Thank you to everyone that left us a review:

- iTunes: codeand40k, buckrivard

- Stitcher: Jediknightluke, nmolina

Factor Tres – Async Serverless The first two factors, realtime GraphQL and reliable eventing, provide the foundation for a decoupled architecture that paves the way for the third factor: async serverless. These serverless processes must have two properties: - Idempotency: Events are delivered at least once, so a handler may see the same event more than once and must produce the same result each time.

- Out of order messaging: The order the events are received is not guaranteed.
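A handler that copes with both properties might look roughly like the Python sketch below. Everything here is an assumption made for illustration: the event shape (`id`, `version`, `email`), the `AccountProjection` class, and the choice to keep the highest version are not taken from the 3factor documentation.

```python
class AccountProjection:
    """Hypothetical event handler that tolerates duplicate and out-of-order events."""

    def __init__(self):
        self.seen_ids = set()
        self.email = None
        self.email_version = 0

    def handle(self, event):
        """Apply an event; return False if it was a duplicate delivery."""
        if event["id"] in self.seen_ids:
            return False  # idempotency: a redelivered event changes nothing
        self.seen_ids.add(event["id"])
        # Ordering: only accept data that is newer than what we already hold,
        # so a late-arriving older event cannot overwrite newer state.
        if event["version"] > self.email_version:
            self.email = event["email"]
            self.email_version = event["version"]
        return True


projection = AccountProjection()
deliveries = [
    {"id": "evt-2", "version": 2, "email": "new@example.com"},  # arrives first
    {"id": "evt-1", "version": 1, "email": "old@example.com"},  # arrives late
    {"id": "evt-2", "version": 2, "email": "new@example.com"},  # delivered twice
]
outcomes = [projection.handle(event) for event in deliveries]
print(outcomes, projection.email)
```

A real serverless function would keep the seen IDs and versions in durable storage, not in memory, since each invocation may run in a fresh process.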

Traditional vs 3factor app

| Traditional application | 3factor application |
| --- | --- |
| Synchronous procedure code. | Loosely coupled event handlers. |
| Deployed on VMs or containers. | Deployed on serverless platforms. |
| You manage the platform. | The platform is managed for you. |
| Requires operational expertise. | No operational expertise necessary. |
| Auto-scale when possible. | Auto-scales by default. |

Benefits of serverless architectures - No-ops: no run time to manage.

- Free scale: scales based on utilization.

- Cost: you pay for utilization.

Sample serverless providers When to use the 3 Factor app? - Multiple subsystems that need to subscribe to the same events.

- Low latency events.

- Complex event processing.

- High volume, velocity data.

Benefits - Producers and consumers are decoupled.

- No point-to-point integrations.

- Consumers can respond to events immediately as they arrive.

- Highly scalable and distributed.

- Subsystems have independent views of the event stream.

Challenges - Guaranteed event delivery.

- Processing events in order.

- Processing events exactly once.

- Latency related to initial serverless start up time.

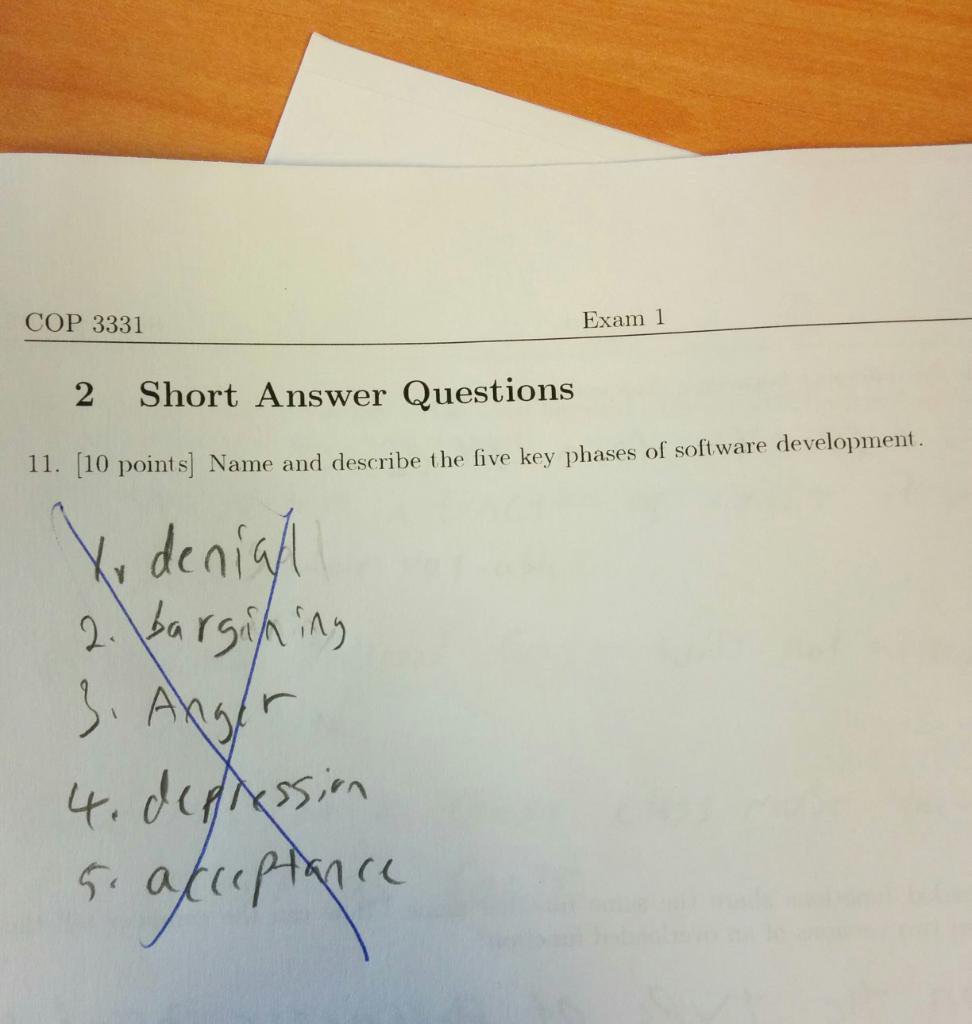

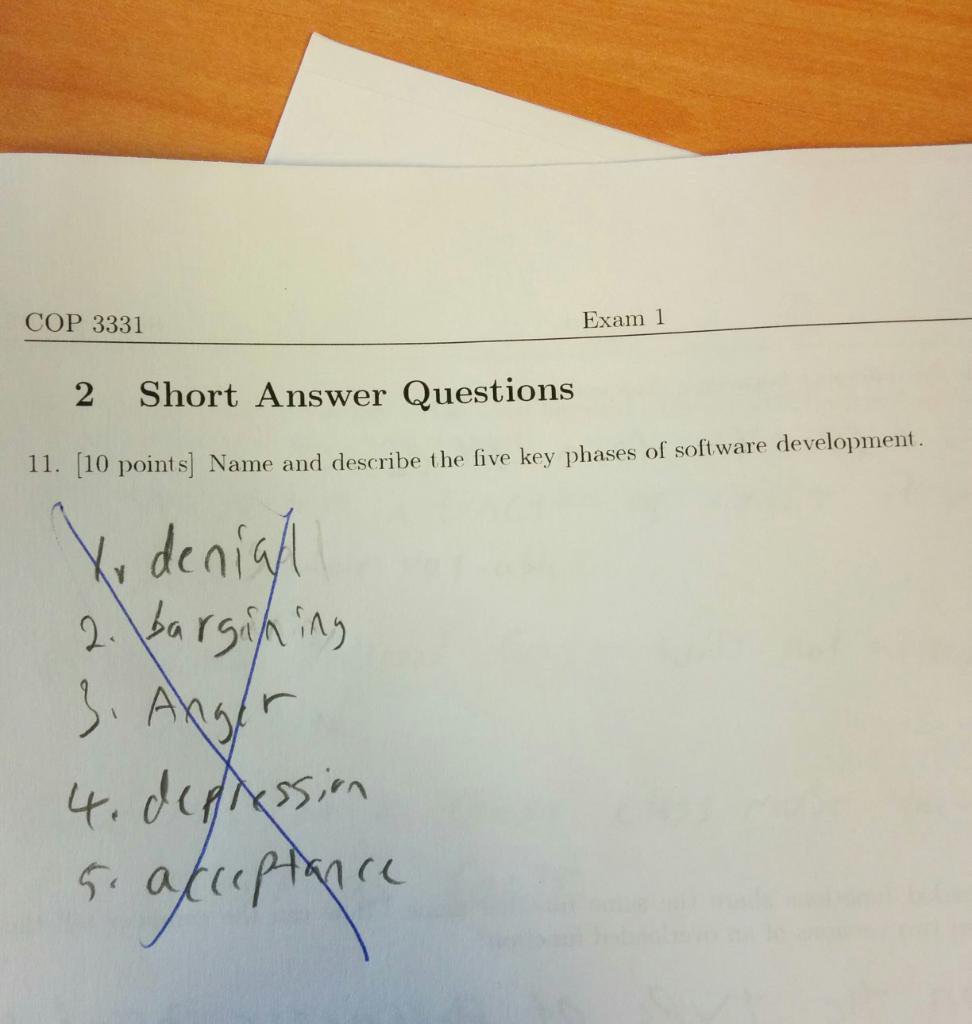

The five key phases of software development.

Resources We Like

Tip of the Week - Keep your email address private in your GitHub repo’s git log by setting your email address to `github_username@users.noreply.github.com` like `git config user.email janedoe@users.noreply.github.com`. (GitHub)

- Darknet Diaries: True stories from the dark side of the Internet (darknetdiaries.com)

- ARM Template Viewer for VS Code displays a graphical preview of Azure Resource Manager (ARM) templates. (marketplace.visualstudio.com)

- WSL Support Framework for IntelliJ and RubyMine (plugins.jetbrains.com)

- Visual Studio Code Remote – WSL extension lets you use the Windows Subsystem for Linux as your development environment within VS Code. (code.visualstudio.com)

- What is Azure Data Studio? (docs.microsoft.com)

- The DevOps Handbook is available on Audible! (Audible, Amazon)

Direct download: coding-blocks-episode-117.mp3

Category: Software Development

-- posted at: 10:50pm EDT


Sun, 29 September 2019

We discuss the second factor of Hasura’s 3factor app, Reliable Eventing, as Allen says he still _surfs_ the Internet (but really, does he?), it’s never too late for pizza according to Joe, and Michael wants to un-hear things. This episode’s full show notes can be found at https://www.codingblocks.net/episode116, just in case you’re using your podcast player to read this.

Sponsors

Survey Says …

What's the first thing you do when picking up a new technology or stack?

News

- Thank you to everyone that left us a review:

- iTunes: !theBestCoder, guacamoly, Fishslider

- Stitcher: SpottieDog

- We have photographic evidence that we were in the same room with Beej from Complete Developer at Atlanta Code Camp.

The Second Factor – Reliable Eventing

- Don’t allow for mutable state. Get rid of in memory state manipulation in APIs.

- Persist everything in atomic events.

- The event system should have the following characteristics:

- Atomic – the entire operation must succeed and be isolated from other operations.

- Reliable – events should be delivered to subscribers at least once.

Comparing the 3factor app Eventing to Traditional Transactions

| Traditional application | 3factor application |
| --- | --- |
| Request is issued, data is loaded from various storage areas, business logic is applied, and finally the data is committed to storage. | Request is issued and all events are stored individually. |
| Avoid using async features because it’s difficult to roll back when there are problems. | Due to the use of the event system, async operations are much easier to implement. |
| Have to implement custom recovery logic to rewind the business logic. | Recovery logic isn’t required since the events are atomic. |

Benefits of an Immutable Event Log

- Primary benefit is simplicity when dealing with recovery. There’s no custom business logic because all the event data is available for replayability.

- Due to the nature of persisting the individual event data, you have a built in audit trail, without the need for additional logging.

- Replicating the application is as simple as taking the events and replaying the business logic on top of them.
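To make the replay idea concrete, here is a small sketch under assumed names: the `Event` shape, the `"deposited"` and `"withdrew"` kinds, and the `apply` and `replay` functions are invented for the example. State is never stored directly; it is rebuilt by running the business logic over the log.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str      # "deposited" or "withdrew" (hypothetical event kinds)
    amount: int


def apply(balance: int, event: Event) -> int:
    """Pure business logic: current state plus one event gives the next state."""
    if event.kind == "deposited":
        return balance + event.amount
    if event.kind == "withdrew":
        return balance - event.amount
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events) -> int:
    """Rebuild state from scratch by folding over the log."""
    balance = 0
    for event in events:
        balance = apply(balance, event)
    return balance


log = (
    Event("deposited", 100),
    Event("withdrew", 30),
    Event("deposited", 5),
)

balance = replay(log)                 # state after every event
balance_at_step_2 = replay(log[:2])   # state as of any earlier point in the log
```

Because the log is the source of truth, replaying a prefix of it answers "what did the state look like back then?" without any extra logging, which is the built-in audit trail mentioned above.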

Downsides of the Immutable Event Log

- Information isn’t (instantly) queryable, not taking into account snapshotting.

- CQRS (command query responsibility segregation) helps to answer this particular problem.

- Forcing event sourcing on every part of the system introduces significant complexity where it may not be needed.

- For evolving applications, changing business needs require changes to the event schema and this can become very complex and difficult to maintain.

- Upcasting: converting an event record on the fly to reflect a newer schema. Problem with this is you’ve now defeated the purpose of immutable events.

- Lazy upcasting is evolving the event records over time, but that means you’re now maintaining code that knows how to understand each version of the event records in the system, making it very difficult to maintain.

- Converting the entire set of data any time a schema needs to change. Keeps things in sync but at a potentially large cost of taking the hit to update everything.

- Considerations of event granularity, i.e. how many isolated events are too much and how few are not enough?

- Too many and there won’t be enough information attached to the event to be meaningful and useful.

- Too few and you take a major hit on serialization/deserialization and you run the risk of not having any domain value.

- So what’s the best granularity? Keep the events closely tied to the DDD intent.

- Fixing bugs in the system may be quite a bit more difficult than a simple update query in a database because events are supposed to be immutable.
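The upcasting approach mentioned in the list above can be sketched as follows. The event shape, the `version` field, and the v1-to-v2 change (splitting `name` into two fields) are assumptions for illustration only. The stored record is left untouched and converted each time it is read.

```python
def upcast_v1_to_v2(event: dict) -> dict:
    """v2 splits the single 'name' field into 'first_name' and 'last_name'."""
    first, _, last = event["name"].partition(" ")
    return {"version": 2, "first_name": first, "last_name": last}


# One upcaster per schema step; this table grows with every schema change,
# which is the maintenance cost the notes warn about.
UPCASTERS = {1: upcast_v1_to_v2}
CURRENT_VERSION = 2


def upcast(event: dict) -> dict:
    """Return the event in the current schema without modifying the stored record."""
    while event["version"] < CURRENT_VERSION:
        event = UPCASTERS[event["version"]](event)
    return event


stored = {"version": 1, "name": "Ada Lovelace"}
current = upcast(stored)
```

Every new schema version adds another entry to `UPCASTERS`, so the code that understands old records never goes away unless the whole log is converted.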

Resources We Like

Tip of the Week

- Use `docker system` to manage your Docker environment.

- Use `docker system df` to see the disk usage.

- Use `docker system prune` to clean up your environment.

- How To View Clipboard History On Windows 10 (AddictiveTips.com)

- Use `docker-compose down -v` to also remove the volumes when stopping your containers.

- Intel 660p M.2 2TB NVMe PCIe SSD (Amazon)

- Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems (Amazon)

Direct download: coding-blocks-episode-116.mp3

Category: Software Development

-- posted at: 10:53pm EDT


Mon, 16 September 2019

We begin to twitch as we review the first factor of Hasura’s 3factor app, Realtime GraphQL, while Allen gets distrac … SQUIRREL!, Michael might own some bell bottoms, and Joe is stuck with cobalt. If you’re reading these notes via your podcast app, you can find this episode’s full show notes and join in on the conversation at https://www.codingblocks.net/episode115.

Sponsors

- Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after creating your first dashboard.

- O’Reilly Software Architecture Conference – Microservices, domain-driven design, and more. The O’Reilly Software Architecture Conference covers the skills and tools every software architect needs. Use the code `BLOCKS` during registration to get 20% off of most passes.

- Educative.io – Level up your coding skills, quickly and efficiently. Visit educative.io/codingblocks to get 20% off any course.

Survey Says …

Would you be interested in doing a Coding Blocks Fantasy Football League? Take the survey here: https://www.codingblocks.net/episode115.

News

- To everyone that took a moment to leave us a review, thank you. We really appreciate it.

- iTunes: Zj, Who farted? Not me., Markus Johansson, this jus10, siftycat, Runs-With-Scissors

- Stitcher: wuddadid, unclescooter

- Zach Ingbretsen gives us a Vim tutorial: RAW Vim Workshop/Tutorial (YouTube)

3factor app and the First Factor

3factor app

- The 3factor app is a modern architecture for full stack applications, described by the folks at Hasura.

- High feature velocity and scalability from the start:

- Real time GraphQL

- Reliable eventing

- Async serverless

- Kinda boils down to …

- Have an API gateway (for them, GraphQL).

- Store state in a (most likely distributed) store.

- Have services interact with state via an event system.

- Versus how we used to do things: a REST API for each individual entity.

- Let’s be honest though. We probably created a single very specialized REST API for a particular page all in the name of performance. But it was only used for that page.

- Related technologies:

- Web Sockets

- Serverless

- Lambda / Kappa – types of streaming architectures

- Event based architectures

- Microservices

Factor 1 – Realtime GraphQL

Use Realtime GraphQL as the Data API Layer

- Must be low-latency.

- Less than 100 ms is ideal.

- Must support subscriptions.

- Allows the application to consume information from the GraphQL API in real-time.

Some Comparisons to Typical Backend API Calls

| Traditional application | 3factor application |
| --- | --- |
| Uses REST calls. | Uses GraphQL API. |
| May require multiple calls to retrieve all data (customer, order, order details) – OR a complex purpose built call that will return all three in one call. | Uses GraphQL query to return data needed in a single call defined by the caller. |
| Uses something like Swagger to generate API documentation. | GraphQL will auto-generate entire schema and related documents. |
| For realtime you’ll set up WebSocket based APIs. | Use GraphQL’s native subscriptions. |
| Continuously poll backend for updates. | Use GraphQL’s event based subscriptions to receive updates. |

Major Benefits of GraphQL

- Massively accelerates front-end development speed because developers can get the data they want without any need to build additional APIs.

- GraphQL APIs are strongly typed.

- Don’t need to maintain additional documenting tools. Using a UI like GraphiQL, you can explore data by writing queries with an Intellisense like auto-complete experience.

- Realtime built in natively.

- Prevents over-fetching. Sorta. To the client, yes. Not necessarily so though on the server side.
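The over-fetching point can be illustrated without a GraphQL server at all. The sketch below is a toy, not a GraphQL library: `select`, the nested-dict `query` format, and the `customer` record are made up for the example. The caller names the fields it wants and the response carries only those fields, in the same shape as the request.

```python
def select(data: dict, selection: dict) -> dict:
    """Return only the requested fields.

    `selection` maps a field name to True (leaf) or to a nested selection.
    """
    result = {}
    for name, sub in selection.items():
        value = data[name]
        if sub is True:
            result[name] = value
        elif isinstance(value, list):
            result[name] = [select(item, sub) for item in value]
        else:
            result[name] = select(value, sub)
    return result


# The full record as the backend holds it (hypothetical data).
customer = {
    "id": 7,
    "name": "Ada",
    "email": "ada@example.com",
    "orders": [
        {"id": 1, "total": 20, "notes": "gift wrap"},
        {"id": 2, "total": 35, "notes": ""},
    ],
}

# Roughly the shape of the GraphQL query: { name orders { total } }
query = {"name": True, "orders": {"total": True}}
response = select(customer, query)
```

Note that `select` still had the whole `customer` record in hand before trimming it, which mirrors the caveat above: the client avoids over-fetching, but the server may still load more than it returns.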

A Little More About GraphQL - GraphQL is a Query Language for your API.

- It isn’t tied to any particular database or storage engine.

- It’s backed by your existing code and data.

- Queries are all about asking for specific fields on objects.

- The shape of your query will match the shape of the results.

- Queries allow for traversing relationships, so you can get all the data you need in a single request.

- Every field and nested object has its own set of arguments that can be passed.

- Many types are supported, including enums.

- Aliases

- GraphQL has the ability to alias fields to return multiple results of the same type but with different return names (think of aliasing tables in a database query).

- Fragments

- Fragments allow you to save a set of query fields to retrieve, allowing you to later reuse those fragments in simpler queries. This allows you to create complex queries with a much smaller syntax.

- There’s even the ability to use variables within the fragments for further queries requiring more flexibility.

- Operations

- Three types of operations are supported: query, mutation, and subscription.

- Providing an operation name is not required, except for multi-operation documents, but is recommended to aid debugging and server side logging.

- Variables

- Queries are typically dynamic by way of variables.

- Supported variable types are scalars, enums, and input object types.

- Input object types must map to server defined objects.

- Can be optional or required.

- Default values are supported.

- Using variables, you can shape the results of a query.

- Mutations

- Mutations allow for modifying data.

- Nesting objects allows you to return data after the mutation occurs.

- Mutations, unlike queries, run sequentially, meaning `mutation1` will finish before `mutation2` runs.

- In contrast, queries run in parallel.

- Meta fields

- GraphQL also provides meta fields that you can use to inspect the schema that are part of the introspection system.

- These meta fields are preceded by a double underscore, like `__schema` or `__typename`.

- GraphQL schema language

- Objects are the building blocks of a schema.

- Fields are properties that are available on the object.

- Field return types are defined as well – scalar, enum or objects.

- Scalar types: Int, Float, String, Boolean, ID (special use case), or user defined, which must specify a serializer, deserializer, and validator.

- Fields can also be defined as non-nullable with an exclamation mark after the type, like `String!`.

- This can be done on array types as well after the square brackets to indicate that an array will always be returned, with zero or more elements, like `[String]!`.

- Each field can have zero or more arguments and those arguments can have default values.

- Lists are supported by using square brackets.

- GraphQL’s type system supports interfaces.

- Complex objects can also be passed as input types, however, they are defined as `input` rather than `type`.

Resources We Like

Tip of the Week

- From Google’s Engineering Practices documentation: How to do a code review (GitHub.io).

- This is part of the larger Google Engineering Practices Documentation (GitHub.io).

- Use `CTRL+SHIFT+V` to access Visual Studio’s Clipboard Ring.

- Take control of your tab usage in your browser with Workona.

- Theme your Chrome DevTools!

Direct download: coding-blocks-episode-115.mp3

Category: Software Development

-- posted at: 10:10pm EDT


Mon, 2 September 2019

We learn how to apply the concepts of The Pragmatic Programmer to teams while Michael uses his advertisement voice, Joe has a list, and Allen doesn't want anyone up in his Wheaties.

Direct download: coding-blocks-episode-114.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Mon, 19 August 2019

After 112 episodes, Michael can’t introduce the show, Allen pronounces it “ma-meee”, and don’t make Joe run your janky tests as The Pragmatic Programmer teaches us how we should use exceptions and program deliberately. How are you reading this? If you answered via your podcast player, you can find this episode’s full show notes and join the conversation at https://www.codingblocks.net/episode113.

Sponsors

- Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after creating your first dashboard.

Survey Says …

When you want to bring in a new technology or take a new approach when implementing something new or add to the tech stack, do you ...? Take the survey here: https://www.codingblocks.net/episode113

News

- Thank you for taking a moment out of your day to leave us a review.

- iTunes: MatteKarla, WinnerOfTheRaceCondition, michael_mancuso

- Stitcher: rundevcycle, Canmichaelpronouncethis, WinnerOfTheRaceCondition, C_Flat_Fella, UncleBobsNephew, alexUnique

- Autonomous ErgoChair 2 Review (YouTube)

- Come see us Saturday, September 14, 2019 at the Atlanta Code Camp 2019 (atlantacodecamp.com)

- Are they cakes, cookies, or biscuits? (Wikipedia)

Intentional Code

When to use Exceptions

- In an earlier chapter, Dead Programs Tell No Lies, the book recommends:

- Checking for every possible error.

- Favor crashing your program over running into an inconsistent state.

- This can get really ugly! Especially if you believe in the “one return at the bottom” methodology for your methods.

- You can accomplish the same thing by just catching an exception for a block of code, and throwing your own with additional information.

- This is nice, but it brings up the question: when should you return a failed status, and when should you throw an exception?

- Do you tend to throw more exceptions in one layer than another, such as throwing more in your C# layer than your JS layer?

- The authors advise throwing exceptions for unexpected events.

- Ask yourself, will the code still work if I remove the exception handlers? If you answered “no”, then maybe you’re throwing exceptions for non-exceptional circumstances.
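The idea of catching an exception for a whole block and throwing your own with additional information might look like the sketch below. `OrderImportError`, `parse_quantity`, and `import_order` are hypothetical names for illustration, not anything from the book.

```python
class OrderImportError(Exception):
    """Hypothetical domain exception that carries extra context."""


def parse_quantity(raw: str) -> int:
    return int(raw)  # raises ValueError on bad input


def import_order(line_number: int, raw_quantity: str) -> int:
    # One handler for the whole block instead of a status check after each call.
    try:
        quantity = parse_quantity(raw_quantity)
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        return quantity
    except ValueError as exc:
        # Re-raise with additional information; `from exc` keeps the original cause.
        raise OrderImportError(
            f"line {line_number}: bad quantity {raw_quantity!r}"
        ) from exc


try:
    import_order(12, "three")
except OrderImportError as err:
    message = str(err)
    cause = err.__cause__
```

The happy path stays free of status checks, and the caller gets one exception type that says where the problem was while the original error remains attached as the cause.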

Tip 34 - Use exceptions for exceptional problems

Exceptions vs Error Handling

- Should you throw an exception if you try to open a file, and it doesn’t exist?

- If it should be there, e.g. a config file, yes, throw the exception.

- If it might be OK for it not to be there, e.g. you’re polling for a file to be created, then no, you should handle the error condition.

- Is it dangerous to rely on implicit exception throwing, i.e. opening a file that isn’t there?

- On the one hand, it’s cleaner without checking for the exceptions, but there’s no signaling to your co-coders that you did this intentionally.

- Exceptions are a kind of coupling because they break the normal input/output contract.

- Some languages / frameworks allow you to register error handlers that are outside the normal flow of the program.

- This is great for certain types of problems, particularly when there is a prescribed flow, such as error pages, serialization, or SSL errors.
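The missing-file question above can be sketched with two functions that treat the same condition differently. The function names and file names are assumptions for the example; the temporary directory is only there to make it runnable.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def load_config(path: Path) -> dict:
    # The config file should always exist, so a missing file is exceptional:
    # let FileNotFoundError propagate instead of hiding it.
    with path.open() as handle:
        return json.load(handle)


def read_if_ready(path: Path) -> Optional[str]:
    # While polling, "not there yet" is an expected outcome, so the caller
    # gets a normal return value (None) rather than an exception.
    try:
        return path.read_text()
    except FileNotFoundError:
        return None


with tempfile.TemporaryDirectory() as tmp:
    report = Path(tmp) / "report.csv"
    first_poll = read_if_ready(report)    # file not created yet
    report.write_text("done")
    second_poll = read_if_ready(report)   # file now exists

    try:
        load_config(Path(tmp) / "app.json")
        config_error = None
    except FileNotFoundError as exc:
        config_error = exc
```

The underlying error is identical in both cases; what differs is whether the caller considers it part of normal operation.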

Programming by Coincidence

- What does it mean to “program by coincidence”?

- Getting lured into a false sense of security and then getting hit by what you were trying to avoid.

- Avoid programming by coincidence and instead program deliberately. Don’t rely on being lucky.

- Writing code and seeing that it works without fully understanding why is how you program by coincidence.

- This really becomes a problem when something goes wrong and you can’t figure out why because you never knew why it worked to start off with.

- We may not be innocent …

- What if you write code that adheres to some other code that was done in error … if that code is eventually fixed, your own code may fail.

- So if it’s working, why would you touch it?

- It might not actually be working …

- Maybe it doesn’t work with a different resolution.

- Undocumented code might change, thus changing your “luck”.

- Unnecessary method calls slow down the code.

- Those extra calls increase the risk of bugs.

- Write well-documented code that adheres to a contract, so that others can build on it deliberately.

Accidents of Context

- You can also make the mistake of assuming certain things are a given, such as that there’s a UI or that there’s a given language.

Implicit Assumptions

- Don’t assume something, prove it.

- Assumptions that aren’t based on fact become a major sticking point in many cases.

Tip 44 - Don’t Program by Coincidence

How to Program Deliberately

- Always be aware of what you’re doing.

- Don’t code blindfolded. Make sure you understand what you’re programming in, both the business domain and the programming language.

- Code from a plan.

- Rely on reliable things. Don’t code based on assumptions.

- Document assumptions.

- Test your code _and_ your assumptions.

- Prioritize and spend time on the most important aspects first.

- Don’t let old code dictate new code. Be prepared to refactor if necessary.
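One way to document assumptions and test them alongside the code is sketched below. The `average_latency_ms` function and its rules are invented for the example; the point is that each stated assumption has a check and a test.

```python
def average_latency_ms(samples: list) -> float:
    """Mean latency in milliseconds.

    Documented assumptions:
    - `samples` is non-empty
    - every sample is a non-negative number of milliseconds
    """
    if not samples:
        raise ValueError("samples must not be empty")
    if any(s < 0 for s in samples):
        raise ValueError("latency samples must be non-negative")
    return sum(samples) / len(samples)


def test_average():
    assert average_latency_ms([10, 20, 30]) == 20


def test_assumptions_are_enforced():
    # Each documented assumption gets a test that proves it is checked.
    for bad in ([], [5, -1]):
        try:
            average_latency_ms(bad)
        except ValueError:
            continue
        raise AssertionError(f"expected ValueError for {bad!r}")


test_average()
test_assumptions_are_enforced()
```

If an assumption later stops holding, the failing test shows which one, instead of the code continuing to work by coincidence.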

Resources We Like

- The Pragmatic Programmer by Andrew Hunt, David Thomas (Amazon)

- The Pragmatic Bookshelf (pragprog.com)

- Oh mother… | Family Feud (YouTube)

- OMG! It’s here! Oh mother… the ON-AIR VERSION!!! | Family Feud (YouTube)

- Thunder Talks (episode 87)

- Spotify engineering culture (part 1) (labs.spotify.com)

Tip of the Week

Direct download: coding-blocks-episode-113.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 4 August 2019

We continue our dive into The Pragmatic Programmer and debate when is it text manipulation vs code generation as Joe can’t read his bill, Michael makes a painful recommendation, and Allen’s gaming lives up to Southern expectations. In case you’re reading these show notes via your podcast player, you can find this episode’s full show notes at https://www.codingblocks.net/episode112 and join in on the conversation.

Sponsors

- Clubhouse – The first project management platform for software development that brings everyone on every team together to build better products. Sign up for two free months of Clubhouse by visiting clubhouse.io/codingblocks.

- Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after creating your first dashboard.

Survey Says …

News

- We really appreciate every review we get. Thank you for taking the time.

- iTunes: AsIRoseOneMorn, MrBramme, MP7373, tbone189, BernieF1982, Davidwrpayne, mldennison

- Stitcher: Ben T, moreginger, Tomski, Java Joe

Blurring the Text Manipulation Line

Text Manipulation

- Programmers manipulate text the same way woodworkers shape wood.

- Text manipulation tools are like routers: noisy, messy, brutish.

- You can use text manipulation tools to trim the data into shape.

- Once you master them, they can provide an impressive amount of finesse.

- Alternative is to build a more polished tool, check it in, test it, etc.
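As an example of trimming data into shape with a text manipulation tool, the sketch below uses Python's `re` module to turn loosely formatted log lines into CSV. The log format, user names, and column names are made up for illustration.

```python
import re

# Hypothetical log excerpt with inconsistent spacing and optional extra fields.
raw = """\
2019-08-04 20:01:07 INFO  user=alice action=login
2019-08-04 20:01:09 WARN  user=bob   action=upload size=12MB
2019-08-04 20:02:30 INFO  user=carol action=logout
"""

# Capture date, time, level, user, and action from each line.
pattern = re.compile(
    r"^(\S+) (\S+) (\w+)\s+user=(\w+)\s+action=(\w+)",
    re.MULTILINE,
)

rows = [",".join(match.groups()) for match in pattern.finditer(raw)]
csv_text = "\n".join(["date,time,level,user,action", *rows])
```

This is the quick, throwaway end of the spectrum: a few lines that reshape the data right now, as opposed to building, checking in, and testing a more polished tool.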

Tip 28 - Learn a Text Manipulation Language.

Code Generators

- When you have a repetitive task, why not generate it?

- The generated code takes away complexity and reduces errors.

- And its reuse has little to no additional cost.

Tip 29 - Write Code That Writes Code.

There are two types of code generators:

- Passive code generators are run once (scaffolding).

- Active code generators are used each time they are required.

Passive code generators save typing by automating…

- New files from a template, i.e. the “File -> New” experience.

- One off conversions (one language to another).

- These don’t need to be completely perfect.

- Producing lookup tables and other resources that are expensive to compute.

- Full-fledged source file.
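A passive generator for a lookup table could be as small as the sketch below. The function name, table name, and choice of a sine table are assumptions for the example. It is run once, and its output becomes ordinary source code that you own from then on.

```python
import math


def generate_sine_table_source(steps: int) -> str:
    """Return Python source text for a precomputed sine lookup table."""
    lines = [
        "# Generated once by a passive generator; edit freely afterwards.",
        "SINE_TABLE = [",
    ]
    for i in range(steps):
        value = math.sin(2 * math.pi * i / steps)
        lines.append(f"    {value:.6f},")
    lines.append("]")
    return "\n".join(lines) + "\n"


source = generate_sine_table_source(4)

# The generated text is ordinary source code; executing it defines SINE_TABLE.
namespace = {}
exec(source, namespace)
table = namespace["SINE_TABLE"]
```

In practice the `source` string would be written to a file and checked in; `exec` is used here only to show that the output is valid code.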

You get to pick how accurate you want the generators to be. Maybe it writes 80% of the code for you and you do the rest by hand.

Active code generators

- Active code generators are necessary if you want to adhere to the DRY principle.

- This form is not considered duplication because it’s generated as needed by taking a single representation and converting it to all of the forms you need.

- Great for system boundaries (think databases or web services).

- Great for keeping things in sync.

- Recommend creating your own parser.

Why generate when you can just … program?

- Scaffolding, so it’s a starting off point that you edit.

- Performance.

- System boundaries.

- Some uses work best when built into your build pipeline.

- Think about automatically generating code to match your DB at compile time, like a T4 generator for Entity Framework.

- It’s often easier to express the code to be generated in a language neutral representation so that it can be output in multiple languages.

- Something like System.CodeDom comes to mind.

- These generators don’t need to be complex.

- And the output doesn’t always need to be code. It could be XML, JSON, etc.
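An active generator takes one representation and regenerates every form from it whenever needed. The sketch below assumes a hypothetical `SCHEMA` dictionary as the single source of truth and emits both Python source and a JSON description from it; none of these names come from the book.

```python
import json

# Single source of truth (hypothetical schema for illustration).
SCHEMA = {
    "name": "Customer",
    "fields": [("id", "int"), ("email", "str")],
}


def to_python_class(schema: dict) -> str:
    """Emit a dataclass definition as source text."""
    lines = [
        "from dataclasses import dataclass",
        "",
        "",
        "@dataclass",
        f"class {schema['name']}:",
    ]
    lines += [f"    {name}: {type_name}" for name, type_name in schema["fields"]]
    return "\n".join(lines) + "\n"


def to_json_description(schema: dict) -> str:
    """Emit the same information as JSON, e.g. for a client in another language."""
    doc = {
        "type": schema["name"],
        "properties": {name: type_name for name, type_name in schema["fields"]},
    }
    return json.dumps(doc, indent=2)


python_source = to_python_class(SCHEMA)
json_text = to_json_description(SCHEMA)

# Regenerated output is used as-is and never edited by hand.
namespace = {}
exec(python_source, namespace)
customer = namespace["Customer"](id=1, email="a@example.com")
```

Adding a field to `SCHEMA` and rerunning the generators updates both outputs together, which is why this form is not considered duplication.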

Resources We Like

Tip of the Week

- Within Visual Studio Code, after you use `CTRL+F` to find some text in your current document, you can take it a step further by pressing `ALT+ENTER` to enter into block selection/edit mode.

- Turn learning Vi into a game with VIM Adventures. (vim-adventures.com)

- Never confuse forward slash with backslash again.

Direct download: coding-blocks-episode-112.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Mon, 22 July 2019

It's about time we finally learn how to debug by taking a page from The Pragmatic Programmer playbook, while Michael replaces a developer's cheat sheet, Joe judges the H-O-R-S-E competition for VI, and Allen stabs you in the front.

Direct download: coding-blocks-episode-111.mp3

Category: Software Development

-- posted at: 10:21pm EDT


Sun, 7 July 2019

We dig into the details of the basic tools while continuing our journey into The Pragmatic Programmer while Joe programs by coincidence, Michael can't pronounce numbers, and Allen makes a point.

Direct download: coding-blocks-episode-110.mp3

Category: Software Development

-- posted at: 8:20pm EDT


Sun, 23 June 2019

Joe is distracted by all of the announcements from E3, Allen is on the run from the Feebs, and Michael counts debugging as coding. All this and more as we continue discussing The Pragmatic Programmer.

Direct download: coding-blocks-episode-109.mp3

Category: Software Development

-- posted at: 9:15pm EDT


Sun, 9 June 2019

The Pragmatic Programmer teaches us how to use tracer bullets versus prototyping while Joe doesn't know who won the Game of Thrones, Allen thought he knew about road numbers, and Michael thinks 475 is four letters.

Direct download: coding-blocks-episode-108.mp3

Category: Software Development

-- posted at: 9:24pm EDT


Sun, 26 May 2019

The dad jokes are back as we learn about orthogonal code while JZ (the 8-mile guy) has spaghetti on him, Michael's Harry Potter references fail, and Allen's voice goes up a couple of octaves.

Direct download: coding-blocks-episode-107.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 12 May 2019

We take a deep dive into the various forms of duplication and jump aboard the complain train as Allen complains about Confluent's documentation, Michael complains about Docker's documentation, and Joe complains about curl.

Direct download: coding-blocks-episode-106.mp3

Category: Software Development

-- posted at: 11:01pm EDT


Sun, 28 April 2019

We begin our journey into the wisdom of The Pragmatic Programmer, which as Joe puts it, it's less about type-y type-y and more about think-y think-y, while Allen is not quite as pessimistic as Joe, and Michael can't wait to say his smart words.

Direct download: coding-blocks-episode-105.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 14 April 2019

We dig into the nitty gritty details of what a Progressive Web App (PWA) is and why you should care, while Allen isn't sure if he is recording, Michael was the only one prepared to talk about Flo and the Progressive Price Gun, and Joe has to get his headphones.

Direct download: coding-blocks-episode-104.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 31 March 2019

The Date deep dive continues as we focus in on C# and JavaScript, while Michael reminisces about the fluorescent crayons, Joe needs a new tip of the week, and Allen confuses time zones.

Direct download: coding-blocks-episode-103.mp3

Category: Software Development

-- posted at: 10:26pm EDT


Sun, 17 March 2019

We take a deep dive into understanding why all Date-s are not created equal while learning that Joe is not a fan of months, King Kong has nothing on Allen, and Michael still uses GETDATE. Oops.

Direct download: coding-blocks-episode-102.mp3

Category: Software Development

-- posted at: 11:35pm EDT


Sun, 3 March 2019

After being asked to quiet down, our friend, John Stone, joins us again as we move the conversation to the nearest cubicle while Michael reminds us of Bing, Joe regrets getting a cellphone, and Allen's accent might surprise you.

Direct download: coding-blocks-episode-101.mp3

Category: Software Development

-- posted at: 8:23pm EDT


Sun, 17 February 2019

We gather around the water cooler to celebrate our 100th episode with our friend John Stone for some random developer discussions as Michael goes off script, Joe needs his techno while coding, and Allen sings some sweet sounds.

Direct download: coding-blocks-episode-100.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Sun, 3 February 2019

We learn all about JAMstack in real-time as Michael lowers the bar with new jokes, Allen submits a pull request, and Joe still owes us a tattoo.

Direct download: coding-blocks-episode-99.mp3

Category: Software Development

-- posted at: 8:01pm EDT


Mon, 21 January 2019

We dig into heaps and tries as Allen gives us an up to date movie review while Joe and Michael compare how the bands measure up.

Direct download: coding-blocks-episode-98.mp3

Category: Software Development

-- posted at: 1:16am EDT


Mon, 7 January 2019

We ring in 2019 with a discussion of various trees as Allen questions when should you abstract while Michael and Joe introduce us to the Groot Tree.

Direct download: coding-blocks-episode-97.mp3

Category: Software Development

-- posted at: 7:40pm EDT

