Sun, 26 April 2020

We gather around the water cooler at 6 foot distances as Michael and Joe aren’t sure what they streamed, we finally learn who has the best fries, at least in the US, and Allen doesn’t understand evenly distributing your condiments. For those reading this via their podcast player, this episode’s full show notes can be found at https://www.codingblocks.net/episode131. Stop by and join in on the conversation. Survey Says Take the survey at: https://www.codingblocks.net/episode131. News - We really appreciate the latest reviews, so thank you!

- iTunes: Braver1996summer, eleneshector, Dis14Joe

- Stitcher: Nik P, Anonymous, Please HelP, Dis14Joe, thephdwasamistake

- Be on the lookout for live streams of Joe on YouTube or Twitch!

Heard Around the Water Cooler - COVID-19 Pushes Up Internet Use 70% And Streaming More Than 12%, First Figures Reveal (Forbes)

- Security at Zoom (Zoom)

- Joe has been busy live streaming (YouTube)

- Come learn Apache Drill with us! (YouTube)

- Cmder – Portable console emulator for Windows.

- We’re still learning the keyboard shortcuts.

- 30-Day LeetCoding Challenge (LeetCode.com)

- Coding Interview Tips, How to get better at technical interviews without practicing (InterviewCake.com)

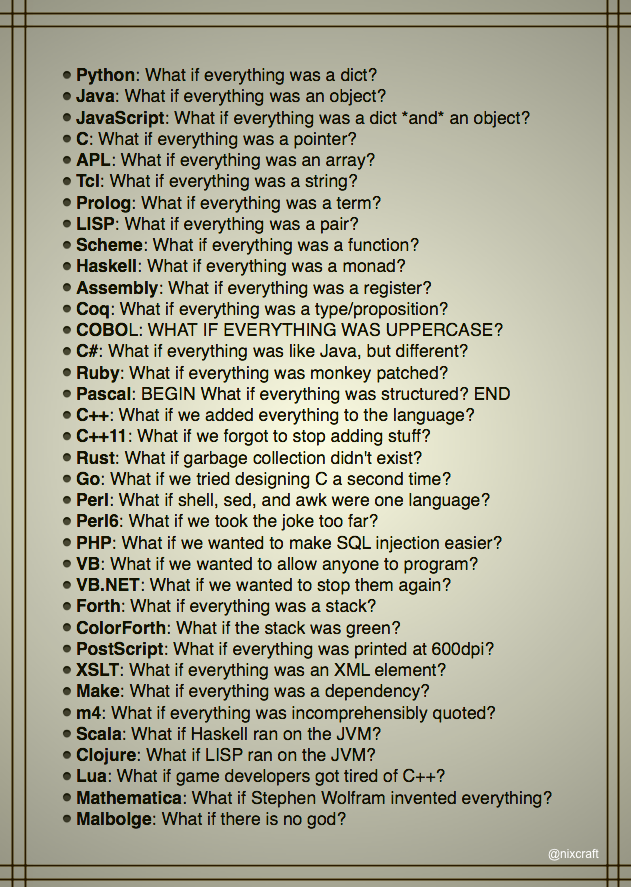

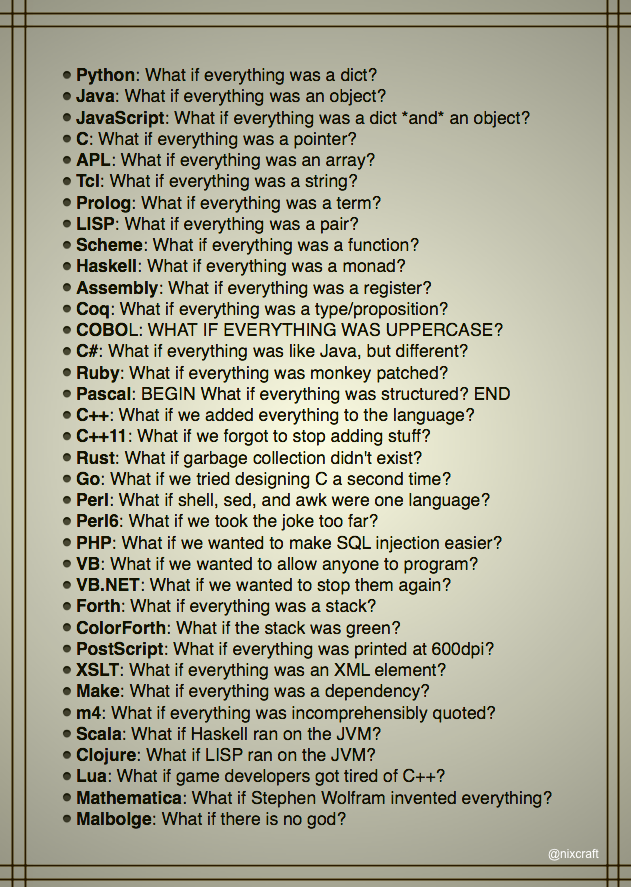

True descriptions of languages ( Reddit) - Allen upgrades to the AMD Ryzen 9 3900x

- AMD Ryzen 9 3900X 12-core, 24-thread CPU (Amazon)

- Asus TUF A15 laptop review: AMD’s Ryzen 4000 is a groundbreaking mobile CPU (Eurogamer.net)

- Big data has been on our minds lately.

Tip of the Week - Interested in COBOL, game development, and Dvorak keyboards? Check out Joe’s new favorite streamer Zorchenhimer. (Twitch)

- Using

helm uninstall doesn’t remove persistent volumes nor their claims. - After doing

helm uninstall RELEASE_NAME, delete the persistent volume claim using kubectl delete pvc PVC_NAME to remove the claim, which depending on the storage class and reclaim policy, will also remove the persistent volume. Otherwise, you’d need to manually remove the persistent volume using kubectl delete pv PV-NAME. - kafkacat – A generic non-JVM producer and consumer for Apache Kafka. (GitHub)

Direct download: coding-blocks-episode-131.mp3

Category: Software Development

-- posted at: 8:35pm EDT

|

Sun, 12 April 2020

We dig into the details of how databases use B-trees as we continue our discussion of Designing Data-Intensive Applications while Michael’s description of median is awful, live streaming isn’t for Allen, and Joe really wants to bring us back from the break. For those reading this via their podcast player, this episode’s full show notes can be found at https://www.codingblocks.net/episode130 in all their glory. Check it out, as Joe would say, and join the conversation. Sponsors - Datadog.com/codingblocks – Sign up today for a free 14 day trial and get a free Datadog t-shirt after install the agent.

Survey Says News - We really appreciate the latest reviews, so thank you!

- iTunes: Anips79, Jacobboyd23, LoadedGalaxy, JenT Avid Listener

- Pluralsight is free for the month of April! (Pluralsight)

- TechSmith is offering Snagit and Video Review for free through June 2020. (TechSmith)

- Remember when we gushed over Zoom?

- Zoom: Every security issue uncovered in the video chat app (CNET)

- Zoom Go Boom (TWiT)

- Maybe should use Jitsi instead of Zoom. (Jitsi)

- Be on the lookout for live streams of Joe on YouTube or Twitch!

B-Trees are Awesome - B-trees are the most commonly used indexing structure.

- Introduced in 1970, and called ubiquitous 10 years later.

- They are the implementation used by most relational database systems, as well as a number of non-relational DB’s.

- “Indexing” is the way databases store metadata about your data to make quick look ups.

- Like the SSTable, the B-tree stores key/value pairs sorted by key. This makes range query look ups quick.

- B-trees use fixed block sizes, referred to as pages, that are usually 4 KB in size which (generally) map well to the underlying hardware because disks are typically arranged in fixed block sizes.

- Every page has an address that can be referenced from other pages. These are pointers to positions on a disk.

- Knowing (or being able to quickly find) which page the data you are looking for is in, drastically cuts down on the amount of data you have to scan through.

- B-trees start with a root page. All key searches start here.

- This root will contain references to child pages based off of key ranges.

- The child pages might contain more references to other child pages based off of more narrowly focused key ranges.

- This continues until you reach the page that has the data for the key you searched for.

- These pages are called leaf pages, where the values live along with the key.

- The branching factor is the number of references to child pages in one page of a B-tree.

- The branching factor is tied to the space needed to store the page references and the range boundaries.

- The book states that it’s common to have a branching factor of several hundred, some even say low thousands!

- The higher the branching factor means the fewer levels you have to go through, i.e. less pages you have to scan, when looking for your data.

- Updating a value in a B-tree can be complicated.

- You search for the leaf node containing the key and then update the value and write it to disk.

- Assuming everything fits in the page, then none of the upstream references change and everything is still valid.

- If you are inserting a new key, you find the leaf node where the key should live based on the ranges and then you add the key and value there.

- Again, if everything fits in the page, then similar to the update, none of the upstream references need to change.

- However, if the key/value would exceed the size of the page, the page is split into two half-pages, and the parent page’s references are updated to point to the new pages.

- This update to the parent page might require it to also be split.

- And this update/split pattern might continue up to and including the root page.

- By splitting the pages into halves as data is added that exceeds the page size, this keeps the tree balanced.

- A balanced tree is the secret to consistent lookup times.

- It terms of big-O, a B-tree with n keys has a depth of O(log n).

- Most DB’s only go 3 to 4 levels deep.

- A tree with four levels, using a 4 KB page size, and a branching factor of 500 can store up to 256 TB!

Making B-Trees Reliable - The main notion is that writes in a B-tree occur in the same location as the original page, that way no references have to change, assuming the page size isn’t exceeded.

- Think of this as a hardware operation.

- These actually map to spinning drives better than SSD’s. SSD’s must rewrite large blocks of a storage chip at a time.

- Because some operations require multiple pages to be written, in the case of splitting full pages and updating the parent, it can be dangerous because if there is a DB crash at any point during the writing of the pages, you can end up with orphaned pages.

- To combat this, implementations usually include a write-ahead log (WAL, aka a redo log).

- This is an append-only file where all modifications go before the tree is updated.

- If the database crashes, this file is read first and used to put the DB back in a good, consistent state.

- Another issue is that of concurrency.

- Multiple threads reading and writing to the B-tree at the same time could read things that would be in an inconsistent state.

- In order to counter this problem, latches, or lightweight locks, are typically used.

B-Tree Optimizations - Some databases use a copy-on-write scheme. This alleviates the need to write to an append only log like previously mentioned and instead you write each updated page to a new location including updated parents that point to it.

- In some cases, abbreviated keys can be stored which saves space and would allow for more branching but fewer node levels, which is fewer hops to get to the leaf nodes.

- This is technically a B+ tree.

- Some implementations attempt to keep leaf pages next to each other in sequential order which would improve the seek speed to the data.

- Some implementations keep additional pointers, such as references to the previous and next sibling pages so it’s quicker to scan without having to go back to the parent to find the pointer to those same nodes.

- Variants like fractal trees, use tactics from log-structured ideas to reduce disk seeks.

Comparing B-Trees and LSM-Trees - B-trees are much more common and mature. We’ve ironed out the kinks and we understand the ways people use RDBMSes.

- LSM-trees are typically faster for writes.

- B-trees are typically faster for reads because LSM-trees have to check multiple data-structures, including SSTables that might be at different levels of compaction.

- Use cases vary, so benchmarking your use cases are important.

LSM-Tree Advantages - The write amplification problem:

- B-trees must write all data at least twice, once to the WAL and another to the page (and again if pages are split). Some storage engines go even further for redundancy.

- LSM-trees also rewrite data, due to compaction and tree/SSTable merging.

- This is particularly a problem for SSDs, which don’t do so well with repeated writes to the same segment.

- LSM-trees typically have better sustained write throughput because they have lower write amplification and because of they generally sequentially write the SSTable files, which is particularly important on HDDs.

- LSM-trees can be compressed better, and involve less space on disk.

- LSM-trees also have lower fragmentation on writes.

LSM-Tree Downsides - Compaction of the SSTables can affect performance, even though the compaction can happen in another thread, because takes up disk I/O resources, i.e. the disk has a finite amount of I/O bandwidth.

- It’s possible that the compaction can not be keep up with incoming events, causing you to run out of disk space, which also slows down reads as more SSTable files need to be read.

- This problem is magnified in a LSM-tree because a key can exist multiple times (before compaction) unlike B-trees which have just one location for a given key.

- The B-tree method for updating also makes it easier for B-trees to guarantee transactional isolation.

Resources We Like - Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems by Martin Kleppmann (Amazon)

- Data Structures – (some) Trees (episode 97)

- B-Tree Visualization (USFCA)

- SQL Server Transaction Log Architecture and Management Guide (docs.microsoft.com)

- Log-structured merge-tree (Wikipedia)

- Postgres Indexes Under the Hood (rcoh.me)

- Is Docker the new Git? (Coding Blocks)

Tip of the Week - Chocolatey adds a PowerShell command

Update-SessionEnvironment or refreshenv for short, that you can use to update the environment variables in your current PowerShell session, much like . $HOME/.profile for MacOS/Linux. (Chocolatey) - Use

docker stats to monitor the usage of your running Docker containers. It’s like top for Docker. (Docker) - Click the Equivalent REST or command line link at the bottom of the Google Cloud Console to get the equivalent as a command you can script and iterate on.

- Jupyter has a Docker image for you: Jupyter Docker Stats. (jupter-docker-stacks.readthedocs.io)

- Apache Drill is an amazing schema-free SQL query engine for Hadoop, NoSQL, and Cloud Storage. (drill.apache.org)

- Presto, aka Presto DB, not to be confused with Presto SQL, is distributed SQL query engine for big data originally developed by Facebook. (prestodb.io)

Direct download: coding-blocks-episode-130.mp3

Category: Software Development

-- posted at: 9:04pm EDT

|

True descriptions of languages (Reddit)

True descriptions of languages (Reddit)